In today’s data‑driven business environment, the quality of your data directly affects decision-making, operational efficiency, and regulatory compliance. High‑quality data is the foundation for analytics, artificial intelligence, and strategic initiatives across departments. When data is inaccurate, inconsistent, or incomplete, organizations pay a steep price in lost revenue, wasted effort, and stalled digital transformation initiatives. According to industry research, poor data quality costs companies millions annually and contributes to failed analytics projects.

Data quality tools help organizations ensure that their data is reliable and fit for purpose. These tools automate tasks such as data profiling, cleansing, monitoring, and governance, enabling businesses to scale their data quality efforts and reduce reliance on manual processes. Selecting the appropriate tool is essential because not all platforms offer the same capabilities or serve the same audiences. This article ranks the top data quality tools available today, compares their features, and highlights how they support business and technical requirements.

The Business Impact of Data Quality

Organizations increasingly recognize that data quality is more than a technical issue; it shapes the very outcomes they depend on. Recent statistics show that a significant portion of business data is inaccurate or incomplete, leading to poor insights and flawed decision-making. Nearly three‑quarters of organizations report that data quality issues negatively impact their operations, and many data projects fail because of these problems.

Poor data quality also carries a financial toll. Research consistently indicates that businesses can lose millions each year due to errors, duplicates, and inconsistencies within their data. These losses stem from inefficiencies, missed opportunities, compliance penalties, and inaccurate analyses.

Chart: Poor Data Quality Costs Companies Millions Annually

The financial impact of poor data quality varies by industry, with financial services suffering the highest average annual losses.

Source: Industry estimates and reports (e.g., Gartner, Experian, IBM, 2024–2025).

On the other hand, improvements in data quality through proper tools and governance can significantly reduce operational costs, improve agility, and enhance customer satisfaction. Many data scientists report spending a disproportionate amount of their time on cleaning and preparing data rather than driving insights, highlighting the need for automated and scalable data quality solutions.

Effective data quality tools allow organizations to focus on insight generation rather than manual correction. This shift is particularly vital as companies pursue advanced analytics and AI initiatives that rely on trustworthy data foundations.

What Modern Data Quality Tools Must Deliver

A modern data quality tool must go beyond basic validation to cover a wide range of capabilities that align with business needs and technical complexity. At a minimum, such tools should provide reliable data profiling, which helps organizations understand the structure, patterns, and anomalies in their datasets. They should also include powerful cleansing and standardization features to correct inconsistencies, deduplicate records, and enforce data norms across systems.
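To make profiling and standardization concrete, here is a minimal sketch in plain Python. It is not taken from any of the tools discussed; the function names `profile_column` and `standardize_country` and the sample records are hypothetical, invented for illustration.

```python
from collections import Counter

def profile_column(rows, column):
    """Summarize one column: row count, missing values, distinct values, top value."""
    values = [row.get(column) for row in rows]
    present = [v for v in values if v not in (None, "")]
    counts = Counter(present)
    return {
        "rows": len(values),
        "missing": len(values) - len(present),
        "distinct": len(counts),
        "most_common": counts.most_common(1)[0] if counts else None,
    }

def standardize_country(rows):
    """Cleansing step: trim whitespace and upper-case the country code."""
    return [{**row, "country": (row.get("country") or "").strip().upper()} for row in rows]

# Hypothetical sample records (assumption: not real data).
customers = [
    {"id": 1, "country": "FI"},
    {"id": 2, "country": "fi"},
    {"id": 3, "country": ""},
    {"id": 4, "country": "SE"},
    {"id": 5, "country": "FI"},
]

before = profile_column(customers, "country")
after = profile_column(standardize_country(customers), "country")
print(before)
print(after)
```

Profiling the raw column reveals three distinct spellings and one missing value; after standardization the same column has two distinct values, which is the kind of inconsistency profiling is meant to surface.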

Continuous monitoring and alerting are increasingly important, as real‑time data flows into decision systems and analytics platforms. Tools that support ongoing assessment of data quality metrics and trigger automated alerts when issues arise help organizations reduce risk and prevent flawed data from propagating. Integration with metadata repositories and lineage tracking is critical for compliance and audit readiness, enabling businesses to trace the history and usage of data elements across systems.
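The monitoring-and-alerting idea can be sketched as a set of threshold rules evaluated against observed metrics. This is an illustrative outline only: the metric names, thresholds, and the `evaluate_rules` helper are assumptions, and a real tool would also route the alerts to a notification channel.

```python
import operator

# Supported comparison operators for rules.
OPS = {"<=": operator.le, ">=": operator.ge}

def evaluate_rules(metrics, rules):
    """Compare observed metrics with rules and return a message per violation."""
    alerts = []
    for name, op, limit in rules:
        observed = metrics[name]
        if not OPS[op](observed, limit):
            alerts.append(f"ALERT {name}={observed} violates {op} {limit}")
    return alerts

# Hypothetical metrics from the latest pipeline run (assumption: invented values).
metrics = {"row_count": 950, "null_rate_email": 0.12, "duplicate_rate": 0.0}

# Each rule: (metric name, operator, limit).
rules = [
    ("row_count", ">=", 1000),
    ("null_rate_email", "<=", 0.05),
    ("duplicate_rate", "<=", 0.01),
]

alerts = evaluate_rules(metrics, rules)
for alert in alerts:
    print(alert)
```

Keeping rules as data rather than code is one way to let non-engineers adjust thresholds without touching the pipeline itself.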

Finally, accessibility for both technical users and business stakeholders is essential. Tools that allow non‑technical users to define rules or view dashboards reduce dependency on engineering teams and increase adoption across the enterprise. Cloud‑native architectures and seamless integration with data lakes, warehouses, and analytics environments further enhance the relevance of data quality tools in modern data ecosystems.

Top 10 Data Quality Tools

Before diving into the ranked list, it is important to understand the criteria used to evaluate and compare these tools. The rankings reflect a combination of factors, including feature breadth, ease of use for both technical and business users, automation capabilities, governance and compliance support, integration flexibility, and overall impact on business outcomes.

Tools were also assessed for their ability to handle diverse data types, support real-time monitoring, and provide actionable insights that enhance decision-making. While some platforms excel in specialized areas like observability or pipeline validation, others offer comprehensive solutions that span profiling, cleansing, governance, and analytics.

This ranking highlights the tools that stand out for their effectiveness, scalability, and alignment with modern enterprise data quality needs.

1. Tikean

Tikean combines the core capabilities of leading data quality tools with an emphasis on ease of business usability and governance integration. Unlike some platforms that focus primarily on observability, pipeline validation, or engineering workflows, Tikean prioritizes enterprise‑wide adoption by supporting both technical users and business stakeholders in a single platform.

Tikean’s no‑code or low‑code rule editors allow business users to define and adjust data quality rules and reports without heavy engineering support, reducing bottlenecks and speeding time to value. Integrated dashboards provide real‑time insights into data health, while cross‑system consistency checks verify that data aligns across source systems and analytical platforms. This proactive approach to data quality helps catch issues before they affect operations or decisions.

Beyond traditional validation, Tikean supports rich data governance capabilities, including lineage tracking, role‑based access control, and customizable governance frameworks tailored to specific business needs. By uniting quality, governance, and business accessibility, Tikean enables organizations to derive reliable insights faster and with greater confidence.

A large financial services firm, for example, might use Tikean to consolidate customer data from multiple systems, automatically detect inconsistencies, and enforce standardized rules, resulting in reduced customer onboarding time and improved reporting accuracy across teams. This level of operational improvement underscores how business‑oriented design paired with robust capabilities can transform data quality programs.

2. Great Expectations

Great Expectations is a popular open‑source data validation framework that allows teams to define expectations or tests against datasets. Its extensibility and flexibility make it appealing for engineering teams that want deep control over rules and validations without the licensing costs of commercial tools. It integrates with data pipelines and supports automated checks that help catch errors early.

However, its developer‑centric nature means it may not be the best fit for broader business user adoption. Teams without engineering expertise may struggle to configure and scale it without additional tooling or wrappers. Nevertheless, for organizations with strong data engineering practices, Great Expectations provides a highly customizable and transparent validation framework.
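The idea of declaring expectations against a dataset can be illustrated without any library. The sketch below is plain Python and deliberately does not use the Great Expectations API; the helpers `expect_not_null`, `expect_between`, and `validate` are hypothetical names chosen for this example, as is the sample data.

```python
def expect_not_null(column):
    """Build a check that fails for rows where `column` is missing."""
    def check(rows):
        failed = [i for i, row in enumerate(rows) if row.get(column) is None]
        return {"check": f"{column} is not null", "success": not failed, "failed_rows": failed}
    return check

def expect_between(column, low, high):
    """Build a check that fails for non-null values outside [low, high]."""
    def check(rows):
        failed = [
            i for i, row in enumerate(rows)
            if row.get(column) is not None and not (low <= row[column] <= high)
        ]
        return {"check": f"{column} between {low} and {high}", "success": not failed, "failed_rows": failed}
    return check

def validate(rows, checks):
    """Run every check and report overall success plus per-check results."""
    results = [check(rows) for check in checks]
    return all(r["success"] for r in results), results

# Hypothetical order records (assumption: invented for illustration).
orders = [
    {"order_id": 1, "amount": 25.0},
    {"order_id": 2, "amount": None},
    {"order_id": 3, "amount": -5.0},
]

passed, results = validate(orders, [expect_not_null("amount"), expect_between("amount", 0, 10_000)])
for result in results:
    print(result)
```

Running such checks as a pipeline step, and failing the step when `passed` is false, is the general pattern that lets validation catch errors before they reach downstream consumers.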

3. Monte Carlo

Monte Carlo has emerged as a leader in data observability, combining lineage tracking with advanced monitoring and alerting capabilities. It enables teams to understand how data flows through their infrastructure and spot issues caused by upstream changes before they affect downstream analytics and applications. Its focus on observability reflects an industry trend toward understanding not just what quality issues exist but where and why they occur.

While Monte Carlo’s strength in observability is clear, it may be more reactive than preventative, focusing on detecting issues after they arise rather than preventing them through built‑in governance frameworks. This makes it excellent for operational reliability but less comprehensive as a full quality program for organizations seeking governance and business user accessibility.

4. Ataccama ONE

Ataccama ONE is an AI‑assisted data quality and governance platform that automates profiling, anomaly detection, and cleansing across complex data landscapes. Its automation, which the platform describes as self‑driving, aims to reduce manual oversight so teams can focus on strategic data use.

This automation and predictive insight set Ataccama apart, particularly for organizations seeking to amplify their quality efforts with intelligent tooling. It supports metadata, lineage, and business glossaries that unify quality and governance across the enterprise. While powerful, it may be best suited to organizations with mature data operations that can fully leverage its advanced feature set.

5. Informatica Data Quality

Informatica Data Quality is a comprehensive solution within Informatica’s broader platform, combining profiling, cleansing, validation, and governance features. It embeds AI‑assisted intelligence into its workflows and allows reusable rule libraries and accelerators to streamline common tasks. Its strong governance integrations help organizations build quality programs that span multiple systems and data domains.

The platform’s enterprise focus, however, comes with higher implementation and licensing costs, which can be a barrier for smaller organizations. It also tends to require experienced data management practitioners to configure and maintain effectively. For companies that need deep integration with governance and cataloging while scaling across complex environments, Informatica remains a leading option.

6. Talend Data Quality

Talend Data Quality offers strong profiling, cleansing, transformation, and enrichment features, supported by a flexible and extensible platform. It integrates seamlessly with Talend’s broader data integration and management ecosystem, making it appealing to organizations already using Talend technologies. Talend supports a wide range of data types and sources, which enhances its flexibility across diverse environments.

Talend’s visual interface and rule editors make it more accessible than some legacy enterprise tools, although it still requires technical understanding to configure advanced workflows. Organizations with distributed data environments or those looking for a balance between power and flexibility often find Talend a compelling choice. Its scalability and integration capabilities allow it to support both operational and analytical data quality needs.

7. Bigeye

Bigeye is a data observability platform that places heavy emphasis on real‑time monitoring and anomaly detection. Its strength lies in identifying unusual patterns and deviations in data streams, allowing teams to react quickly before issues cascade into reporting or operational systems. Bigeye’s alerting mechanisms and dashboards support rapid visibility into data health, which is particularly valuable in dynamic data environments.

However, Bigeye’s focus on observability means it may not offer as comprehensive a suite of cleansing or governance tools as other platforms. It is best suited to organizations looking to enhance visibility and responsiveness rather than transform their entire data quality and governance practice. In environments where continuous pipeline health is mission‑critical, Bigeye provides real‑time confidence and early warning of quality degradation.
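As a rough illustration of anomaly detection on a data health metric, the sketch below flags a value that deviates strongly from recent history using a simple z-score. This is a generic textbook technique, not a description of how Bigeye works; the function name, threshold, and row counts are assumptions.

```python
from statistics import mean, stdev

def is_anomalous(history, latest, z_threshold=3.0):
    """Flag `latest` if it lies more than z_threshold standard deviations from the historical mean."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > z_threshold

# Hypothetical daily row counts for one table (assumption: invented values).
daily_row_counts = [1000, 1020, 980, 1010, 990, 1005, 995]

print(is_anomalous(daily_row_counts, 1015))  # within normal variation
print(is_anomalous(daily_row_counts, 400))   # sharp drop in volume
```

A fixed z-score threshold is the simplest option; production systems typically account for seasonality and trends, which this sketch ignores.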

8. Datafold

Datafold differentiates itself with a focus on analytics reliability and change impact analysis. It excels at validating data transformations, detecting anomalies in data pipelines, and ensuring that changes to data models do not unintentionally introduce errors. Datafold’s insights help data teams maintain confidence in their analytics environments and reduce bugs caused by evolving pipelines.

This focus makes Datafold particularly useful for data engineering teams working with complex pipelines. It may not provide the full suite of governance and business user features found in broader data quality platforms, which can limit its appeal for organizations seeking a one‑stop solution. Still, its emphasis on reliability makes it a strong option for teams prioritizing analytics accuracy and pipeline stability.
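Change impact analysis often comes down to comparing a table before and after a transformation change. The following is a minimal keyed diff in plain Python, offered as a sketch of the general technique rather than Datafold's implementation; `diff_tables` and the sample rows are hypothetical.

```python
def diff_tables(before, after, key):
    """Compare two versions of a table by primary key and list added, removed, and changed keys."""
    before_by_key = {row[key]: row for row in before}
    after_by_key = {row[key]: row for row in after}
    added = sorted(after_by_key.keys() - before_by_key.keys())
    removed = sorted(before_by_key.keys() - after_by_key.keys())
    changed = sorted(
        k for k in before_by_key.keys() & after_by_key.keys()
        if before_by_key[k] != after_by_key[k]
    )
    return {"added": added, "removed": removed, "changed": changed}

# Hypothetical output of a model before and after a code change (assumption: invented data).
production = [
    {"id": 1, "total": 10},
    {"id": 2, "total": 20},
    {"id": 3, "total": 30},
]
candidate = [
    {"id": 1, "total": 10},
    {"id": 2, "total": 25},
    {"id": 4, "total": 40},
]

report = diff_tables(production, candidate, key="id")
print(report)
```

Reviewing such a report before merging a model change shows whether the change touched only the rows it was meant to.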

9. SAS Data Quality

SAS Data Quality is part of the broader SAS analytics ecosystem and provides advanced capabilities for data cleansing, validation, and transformation. It is particularly valuable in industries such as banking, insurance, and healthcare, where regulatory compliance and analytical precision are non‑negotiable. SAS’s strong analytical heritage enables deep rule configuration and comprehensive profiling that catches issues early in the data lifecycle.

However, as with other enterprise solutions, SAS Data Quality can be cost‑intensive and may require specialized training to leverage its full feature set. Its implementation is more intensive than lightweight or cloud‑native alternatives, and smaller organizations may find it more than they need. Organizations with strong SAS usage for analytics often find it a natural fit, while those seeking a broader, business‑accessible data quality platform may look elsewhere.

10. IBM InfoSphere QualityStage

IBM InfoSphere QualityStage is a long‑standing enterprise data quality solution known for its robust cleansing and standardization capabilities. It handles large volumes of data and supports complex matching and validation rules, making it suitable for organizations with extensive legacy environments or regulatory requirements. QualityStage integrates with IBM’s broader InfoSphere suite, enabling metadata sharing and governance linkages that strengthen enterprise data management.

Despite its strengths, InfoSphere QualityStage requires significant expertise to deploy and configure effectively. The platform’s complexity and depth can pose challenges for smaller teams or organizations without dedicated data management resources. Its interface and workflows may feel dated compared with newer, cloud‑native solutions that emphasize usability and automation. Because of its enterprise orientation, QualityStage is best suited to large organizations with mature data governance practices and substantial technical capacity.

Comparing Data Quality Tools by Capability

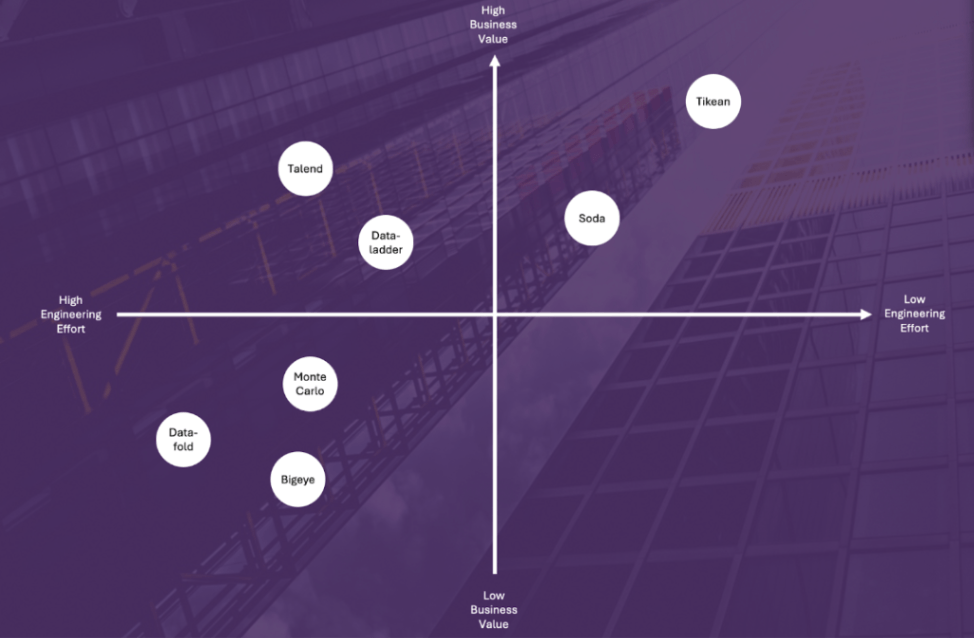

While each tool has strengths, they differ significantly across key capability areas. Some prioritize real‑time monitoring and observability, making them ideal for analytics reliability. Others emphasize governance and cleansing capabilities suited for compliance and operational programs. Open‑source tools offer flexibility and customization but may lack business user accessibility, whereas enterprise platforms deliver broader coverage at the cost of higher implementation effort and expense.

A compact comparison table or scoring matrix can help decision makers weigh these dimensions quickly and align them with organizational goals. Factors such as ease of use, automation level, governance support, engineering effort, business value, and scalability should inform any purchase decision to ensure the chosen tool matches both current needs and future growth.
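A scoring matrix of this kind can be as simple as a weighted average. In the sketch below, the criteria come from the paragraph above, while the weights, the candidate names "Tool A" and "Tool B", and all scores are placeholders invented for illustration; they are not ratings of any product in this article.

```python
# Importance of each criterion (higher = more important). Illustrative values only.
WEIGHTS = {
    "ease_of_use": 3,
    "automation": 2,
    "governance": 3,
    "engineering_effort": 1,  # scored so that higher means LESS effort required
    "business_value": 2,
    "scalability": 1,
}

def weighted_score(scores, weights=WEIGHTS):
    """Weighted average of 1-5 criterion scores."""
    total_weight = sum(weights.values())
    return sum(weights[c] * scores[c] for c in weights) / total_weight

# Placeholder candidates with made-up 1-5 scores (assumption: not real evaluations).
candidates = {
    "Tool A": {"ease_of_use": 4, "automation": 3, "governance": 5,
               "engineering_effort": 2, "business_value": 4, "scalability": 3},
    "Tool B": {"ease_of_use": 2, "automation": 5, "governance": 2,
               "engineering_effort": 4, "business_value": 3, "scalability": 5},
}

ranking = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
for name in ranking:
    print(f"{name}: {weighted_score(candidates[name]):.2f}")
```

The value of the exercise lies mostly in agreeing on the weights: a team that prizes governance will rank the same tools differently from one that prizes automation.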

How to Choose the Right Data Quality Tool

Selecting the right data quality tool requires clarity on business priorities, technical capacity, and team maturity. Organizations with strong engineering resources and a focus on custom validation may opt for open‑source frameworks like Great Expectations, while larger enterprises with complex governance requirements may prefer platforms like Informatica or Ataccama. Tools focused on observability, such as Monte Carlo or Bigeye, are excellent for environments where pipeline stability and real‑time detection are paramount.

Enterprises embarking on digital transformation should also consider long‑term scalability, integration with existing data ecosystems, and business user adoption. Tools that empower both technical and non‑technical stakeholders help distribute ownership of data quality and foster a culture where trusted data becomes a strategic asset rather than a persistent headache.

Best Practices for Implementing Data Quality Tools

Implementing data quality tools effectively goes beyond technology selection. Start by identifying business‑critical data domains and defining clear ownership for data quality outcomes. Establish automated processes for profiling, cleansing, and monitoring, and align quality metrics with business KPIs to demonstrate value. Organizations should iterate on rules and checks over time, incorporating feedback from users and adapting as data needs evolve. Treating data quality as a continuous discipline rather than a one‑time project ensures that insights remain reliable and that investments deliver sustained impact.

Conclusion

High‑quality data is vital to maintaining a competitive edge in the digital economy. Data quality tools help organizations move from reactive data cleanup toward proactive assurance, enabling better decisions and greater confidence in analytics and AI initiatives. By understanding the strengths and trade‑offs of the top tools and aligning them with business objectives, organizations can build robust data quality programs that support operational success and strategic growth. Tikean’s balanced approach to usability, governance, and automation positions it as a strong choice for enterprises seeking a future‑ready data quality solution tailored to both business and technical users.

FAQ

What are the key metrics to measure data quality?

Key data quality metrics include accuracy, completeness, consistency, timeliness, and uniqueness. Accuracy measures whether data correctly reflects real-world entities. Completeness measures whether all required information is captured. Consistency measures whether values agree across systems. Timeliness measures whether data is up to date, and uniqueness measures whether records are free of duplicates. Monitoring these metrics helps organizations maintain reliable, actionable data.
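Three of these metrics can be computed from a single table, as the sketch below shows for completeness, uniqueness, and timeliness. Accuracy and consistency are left out because they require a trusted reference or a second system to compare against. The function names, the 30-day freshness window, and the sample records are assumptions made for this example.

```python
from datetime import date

def completeness(rows, column):
    """Share of rows where `column` is present and non-empty."""
    filled = sum(1 for row in rows if row.get(column) not in (None, ""))
    return filled / len(rows) if rows else 0.0

def uniqueness(rows, column):
    """Share of non-empty values in `column` that are distinct."""
    values = [row[column] for row in rows if row.get(column) not in (None, "")]
    return len(set(values)) / len(values) if values else 0.0

def timeliness(rows, column, as_of, max_age_days):
    """Share of rows whose date in `column` is at most `max_age_days` old."""
    fresh = sum(1 for row in rows if (as_of - row[column]).days <= max_age_days)
    return fresh / len(rows) if rows else 0.0

# Hypothetical customer records (assumption: invented for illustration).
records = [
    {"customer_id": "C1", "email": "a@example.com", "updated": date(2025, 1, 10)},
    {"customer_id": "C2", "email": "", "updated": date(2024, 6, 1)},
    {"customer_id": "C2", "email": "b@example.com", "updated": date(2025, 1, 5)},
    {"customer_id": "C3", "email": "c@example.com", "updated": date(2024, 11, 1)},
]

scores = {
    "completeness_email": completeness(records, "email"),
    "uniqueness_customer_id": uniqueness(records, "customer_id"),
    "timeliness_30d": timeliness(records, "updated", as_of=date(2025, 1, 15), max_age_days=30),
}
print(scores)
```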

How do data quality tools improve business decision-making?

Data quality tools automate profiling, cleansing, validation, and monitoring of data, ensuring that decisions are based on accurate, consistent, and complete information. High-quality data reduces errors in analytics, AI predictions, and operational reporting, helping organizations make timely, confident decisions, improve customer experiences, and minimize costs associated with incorrect or inconsistent data.

Can small and medium-sized businesses benefit from data quality tools?

Yes. While many data quality tools were originally designed for large enterprises, modern platforms like Tikean offer no-code interfaces, automation, and cloud integration suitable for SMEs. These tools enable smaller organizations to maintain high data standards without requiring extensive technical expertise or large IT teams, helping them compete effectively using reliable data insights.

What differentiates Tikean from other data quality tools?

Tikean combines robust data quality, governance, and automation features with a user-friendly, no-code interface accessible to both business and technical users. Its real-time validation, cross-system consistency checks, predictive insights, and advanced integrations with AI, IoT, and RPA differentiate it from developer-centric or observability-only platforms, making it a comprehensive solution for enterprise-wide adoption.

How should an organization choose the right data quality tool?

Selecting the right tool depends on business priorities, technical capacity, data complexity, and team maturity. Organizations should evaluate platforms based on usability for business stakeholders, automation capabilities, governance support, integration flexibility, and scalability. It is important to match the tool to current needs while ensuring it can adapt to evolving data strategies and business growth.